This is being cross-posted and was written by Joff Winks for his blog. Joff is the composer of the music being created for Arctic Awakening. The original blog post can be found here.

Adaptive Music In Video Games

As gamers, we're all familiar with the concept of adaptive music in games. For instance, we encounter a combat scenario, and the music changes to a more intense composition. We're triumphant, and the music registers our success by playing the victory theme.

Switching between two pieces of music is commonplace. But what if we apply the notion of adaptation at the compositional level, dynamically changing the melody, harmony or instrumentation? It would then be possible to sculpt a piece of music to fit the contours of a scene in which the player makes the narrative choices and controls the timing of events.

From the beginning, making music that is reactive to and synchronised with a player's choice has been at the core of our thinking. But more than this, we strive for a captivating soundtrack, music that immerses you in the wild, mysterious world of Arctic Awakening.

In this blog, I want to share some of what goes into writing, recording and controlling Arctic Awakening's music. Here's a deep dive into how I approach the technical demands of adaptive music in video games with an example from episode 1. Enjoy!

Sections: Form & Function

Different music forms have been used for centuries by composers to establish structure and hone composition skills. For games, though, the form of a piece starts with the scene. The structure of the game scene lays out what the music needs to do and when.

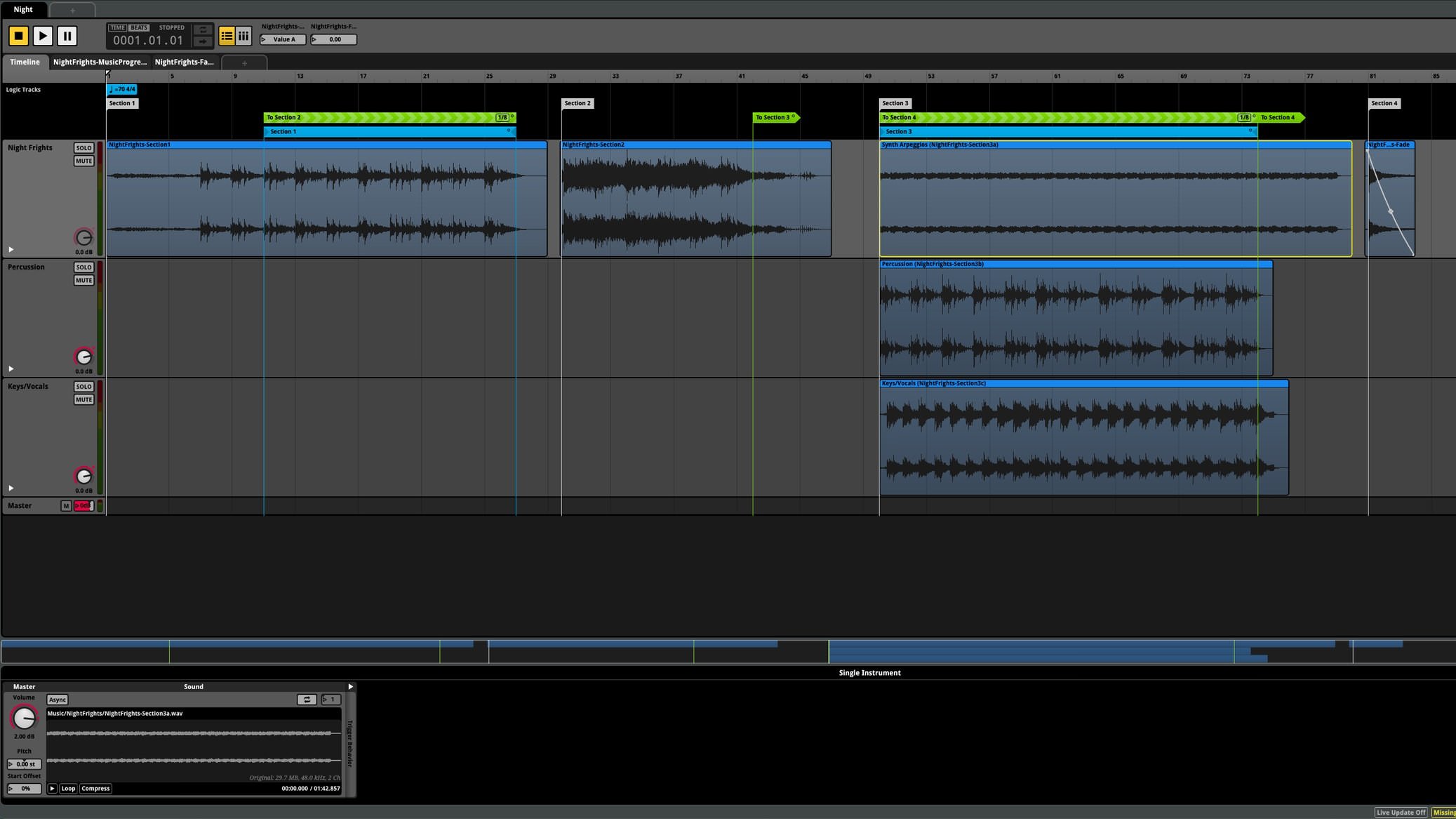

For example, I've identified four sections from the scene Night Frights that set out how the music progresses:

- Wake

- Alfie's Lantern

- Search

- Return to Plane

The composition has four sections to match the scene's structure.

When these sections are activated depends on the player, so discrepancies in the timing of triggering events are inevitable.

Looping

To accommodate the variation in timing, I split the composition into looping sections.

A good loop is a thing of joy! Loops are tremendously satisfying to make and very useful when the length of music required is undetermined. Effectively, once the play head enters a loop, the music can play indefinitely until the player triggers the next section.
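To make the idea concrete, here is a small Python sketch of a play head that keeps looping one section until the game asks for the next. It is a toy model rather than how FMOD works internally, and the class name, method names and 16-beat loop length are all assumptions made for illustration.

```python
from dataclasses import dataclass

# Section names taken from the Night Frights scene.
SECTIONS = ["Wake", "Alfie's Lantern", "Search", "Return to Plane"]


@dataclass
class LoopingScore:
    """Toy model: the current section loops until the next one is triggered."""

    loop_length_beats: int = 16  # hypothetical loop length, not from the score
    section_index: int = 0
    beat_in_loop: int = 0
    loops_completed: int = 0

    @property
    def section(self) -> str:
        return SECTIONS[self.section_index]

    def advance_beat(self) -> None:
        """Move the play head one beat, wrapping back to the loop start."""
        self.beat_in_loop += 1
        if self.beat_in_loop == self.loop_length_beats:
            self.beat_in_loop = 0
            self.loops_completed += 1

    def trigger_next_section(self) -> None:
        """Called when the player causes the next scene event."""
        if self.section_index < len(SECTIONS) - 1:
            self.section_index += 1
            self.beat_in_loop = 0
            self.loops_completed = 0
```

However long the player takes, `advance_beat` simply keeps wrapping around, and the music only moves on when `trigger_next_section` is called.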

The critical point in any loop is where the end meets the beginning. During this transition, any natural decay (the time it takes for the recorded signal to fall from 100% to 0%) at the end of the loop is interrupted when returning to the beginning. At this point, a noticeable artefact is audible, and unless the natural decay at the end is allowed to play out, the loop point is obvious.

To remedy the problem, I record all sections separately, allowing the instruments and effects, such as reverb and delay, to fade to silence. Then in FMOD, I use the transition timeline to crossfade the end of the loop with the beginning.

Using a crossfade creates a seamless loop where the reverb tails and natural sustain of instruments at the end of the loop play underneath the beginning as the play head makes its next pass.
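The effect can be sketched in a few lines of Python. This is a simplified overlap on plain lists of samples, not FMOD's transition timeline: it assumes the recorded section is the loop body followed by its decay tail, and that the tail is no longer than the body. The function name and sample values are made up for illustration.

```python
def loop_with_tail(section: list[float], loop_len: int, passes: int) -> list[float]:
    """Repeat the loop body, mixing the decay tail under each later pass."""
    body, tail = section[:loop_len], section[loop_len:]
    out: list[float] = []
    for n in range(passes):
        for i, sample in enumerate(body):
            # From the second pass onwards, the tail of the previous pass
            # rings out underneath the start of the loop.
            carry = tail[i] if n > 0 and i < len(tail) else 0.0
            out.append(sample + carry)
    return out


# Four samples of loop body followed by a two-sample decay tail.
recorded = [1.0, 1.0, 1.0, 1.0, 0.5, 0.25]
print(loop_with_tail(recorded, loop_len=4, passes=2))
```

On the second pass, the first two samples carry the tail of the first pass, so nothing is cut off at the loop point.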

Transitions

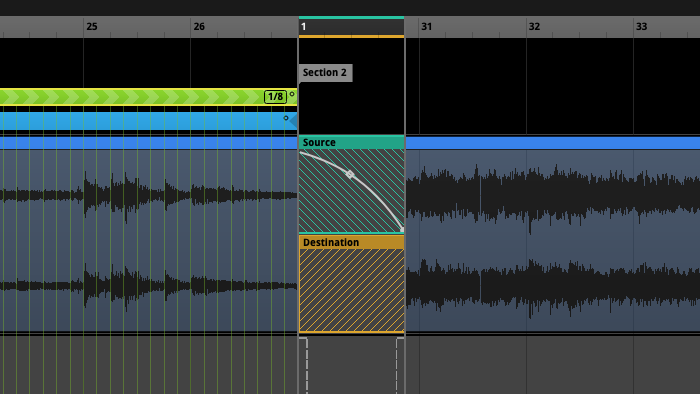

Whilst looping deals with the issue of undetermined duration, transition regions in FMOD manage the shift between sections.

Getting a smooth transition between the parts requires careful writing. Consider the chords of the first section - they need to work with those of the second. Any harmonic progression must make sense from measure to measure and on different beats within bars.

The points at which the music is allowed to transition, whether individual beats, smaller subdivisions or the beginning of bars, affect how quickly the music can progress. For instance, if the composition can only transition once every four beats, then at a tempo of 120 bpm, the maximum time between transition points would be two seconds. Not a lot of time, but enough to make synchronisation problematic in certain situations.
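The two-second figure follows from the tempo: at 120 bpm each beat lasts half a second, so transition points four beats apart are two seconds apart. A short Python sketch of the arithmetic is below. The function name is hypothetical, and the "bar" row assumes four beats to the bar.

```python
def max_wait_seconds(tempo_bpm: float, beats_between_points: float) -> float:
    """Longest possible wait before the next allowed transition point."""
    seconds_per_beat = 60.0 / tempo_bpm
    return beats_between_points * seconds_per_beat


# Compare a few grid sizes at 120 bpm (the bar length of 4 beats is an assumption).
for label, beats in [("bar (4 beats)", 4.0), ("beat", 1.0), ("eighth note", 0.5)]:
    print(f"{label}: up to {max_wait_seconds(120, beats):.2f} s")
```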

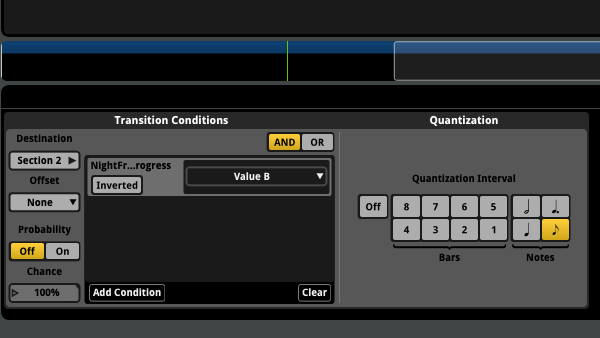

Using FMOD's quantisation feature allows me to set the number of bars, beats or smaller subdivisions between transition points, with smaller values like eighth notes making a more agile transition.
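As a rough illustration of what quantisation does, the Python sketch below snaps a transition request to the next point on a rhythmic grid. It models the general idea only, and FMOD's own scheduling may differ in detail. The function name and the request time are assumptions.

```python
import math


def next_transition_time(request_s: float, tempo_bpm: float, grid_beats: float) -> float:
    """Return the first grid point at or after the moment the game asks to transition."""
    grid_s = grid_beats * 60.0 / tempo_bpm  # grid spacing in seconds
    return math.ceil(request_s / grid_s) * grid_s


# A request arriving 1.1 s into the section at 120 bpm:
print(next_transition_time(1.1, 120, 4.0))  # grid of 4 beats
print(next_transition_time(1.1, 120, 0.5))  # grid of eighth notes
```

With the coarse grid, the music waits until the two-second mark. With the eighth-note grid, it moves on at 1.25 seconds, which is much closer to the moment of the request.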

Sometimes the transition between sections can happen slowly with a long smooth crossfade, but the first music transition in Night Frights occurs in sync with Alfie activating his lantern, which happens in the blink of an eye.

Setting the quantise value low makes sense in this situation, but the design of the composition also plays its part here.

Composition

I spend a lot of time experimenting with compositions, making many mock-ups before settling on a final idea.

Getting adaptive music in games to work as intended requires lots of testing. Night Frights, for instance, has been through several revisions, and each redraft has gotten me closer to my goals whilst simplifying and refining the writing. I'll break down the sections with illustrations from the score.

Section 1

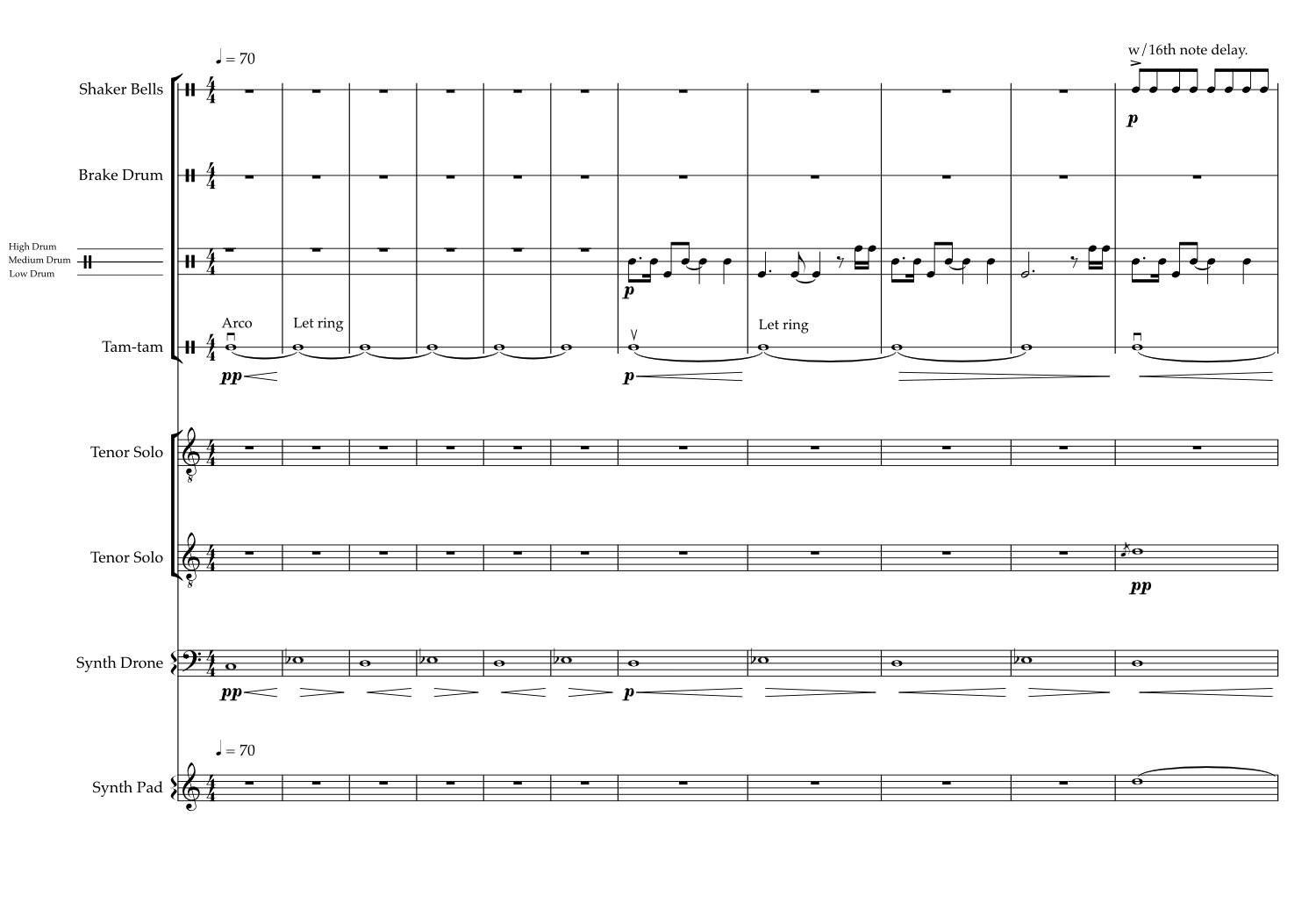

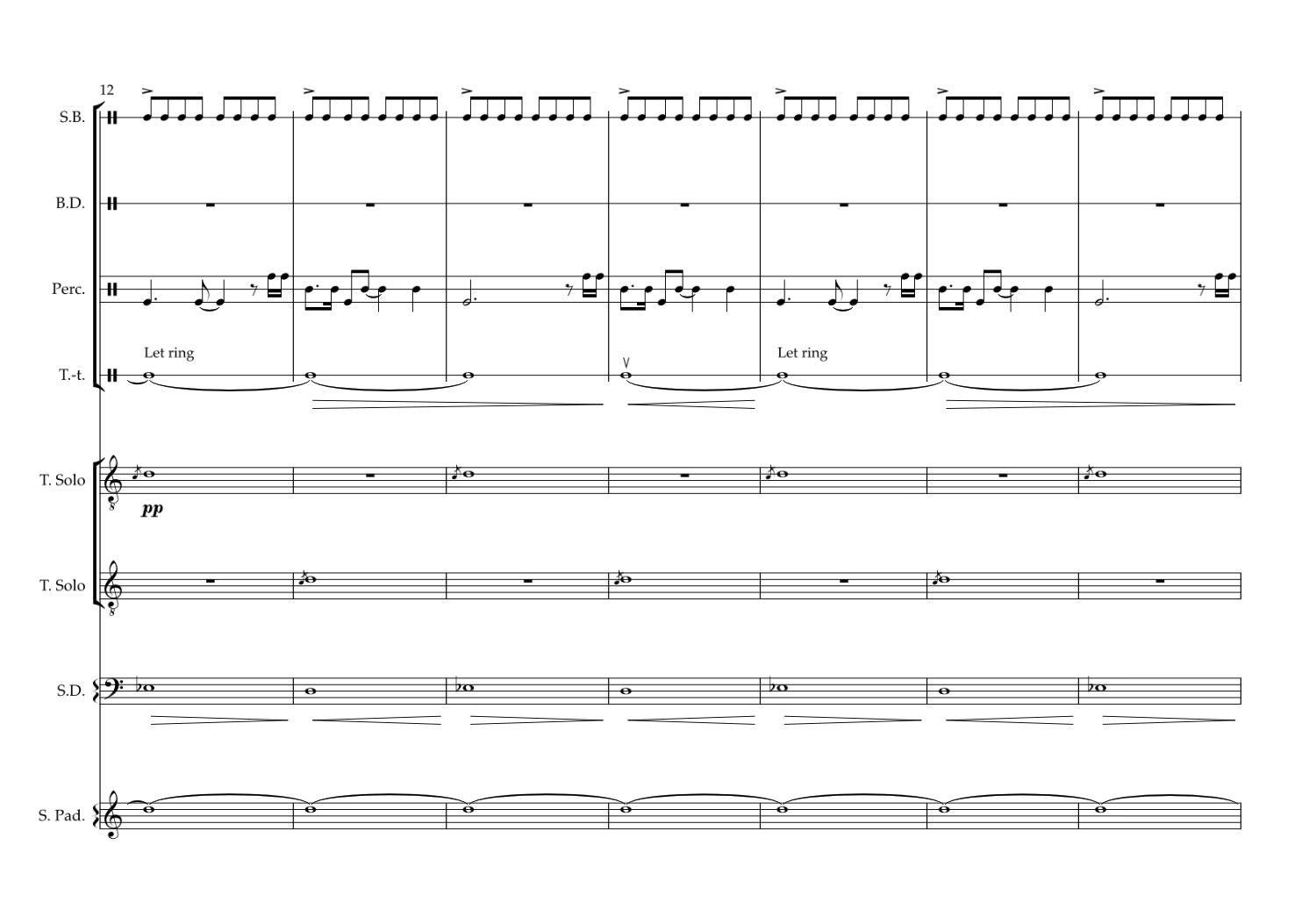

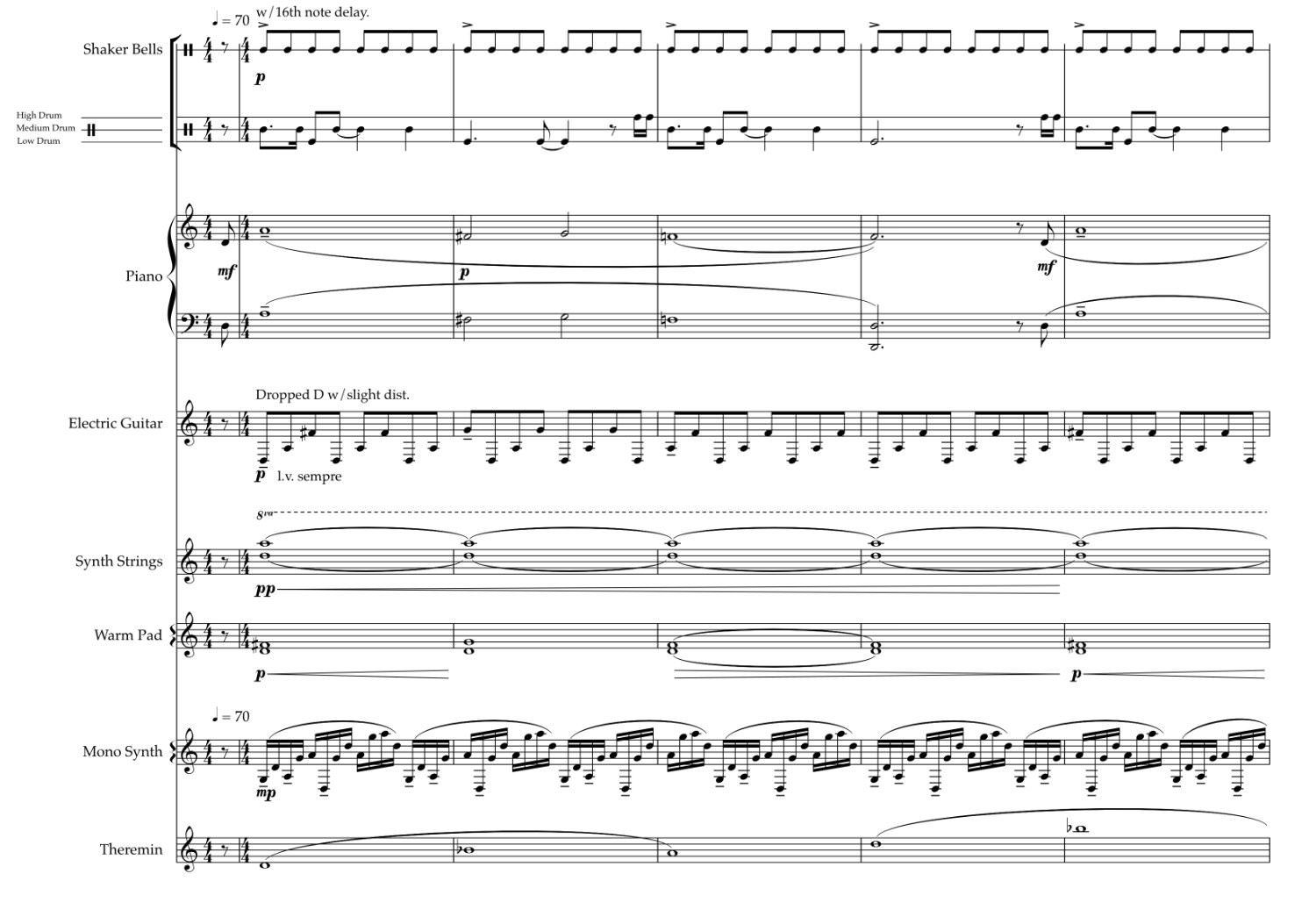

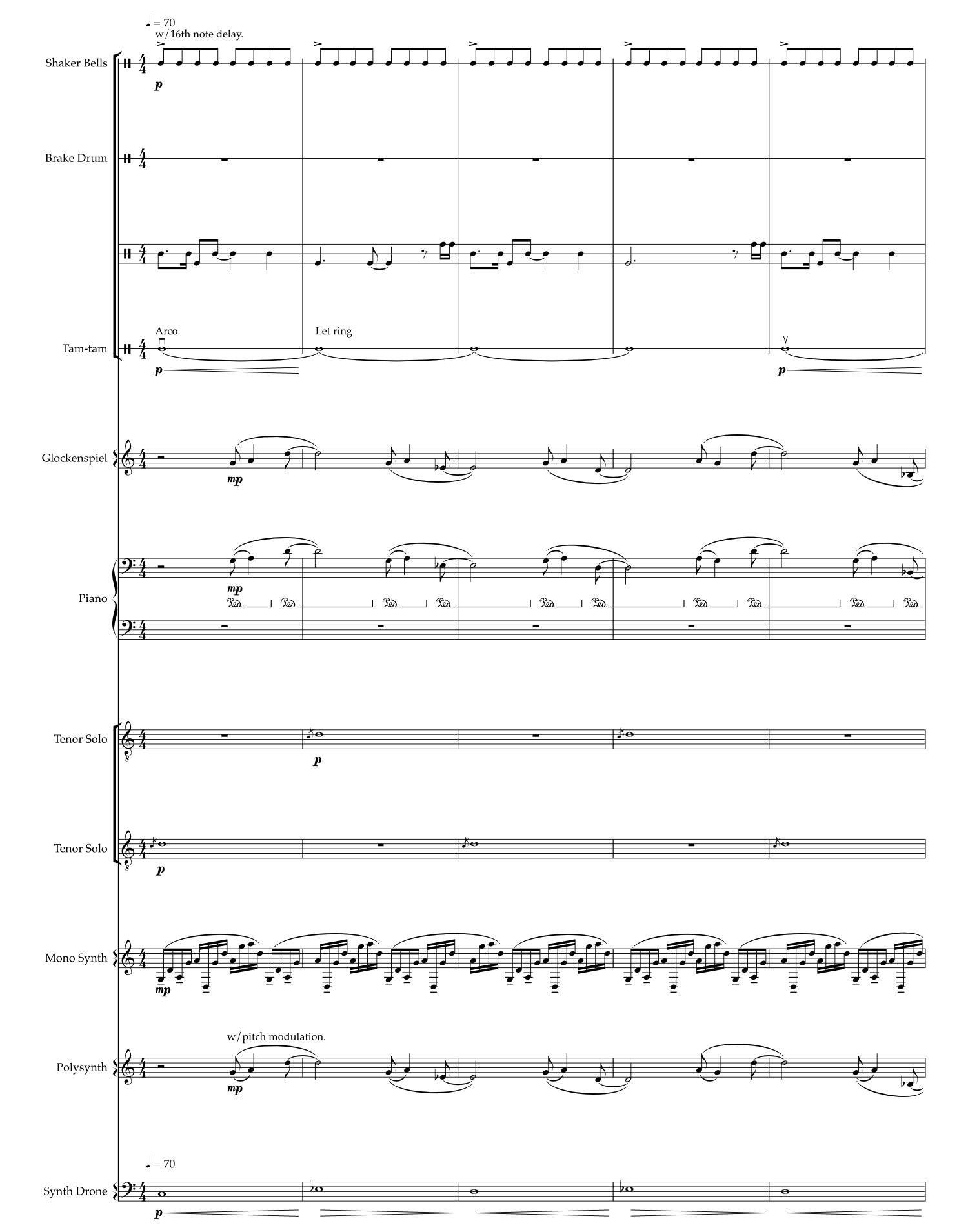

Night Frights begins with a subtle percussive bed. A low drum ostinato and shakers form the rhythmic texture above which a synth drone and eerie vocals sit.

With space between the low rhythmic pulses and the shaker pattern on the 16th note, the section triggered by Alfie's lantern lighting up can jump in on any quaver beat without feeling too abrupt.

Section 2

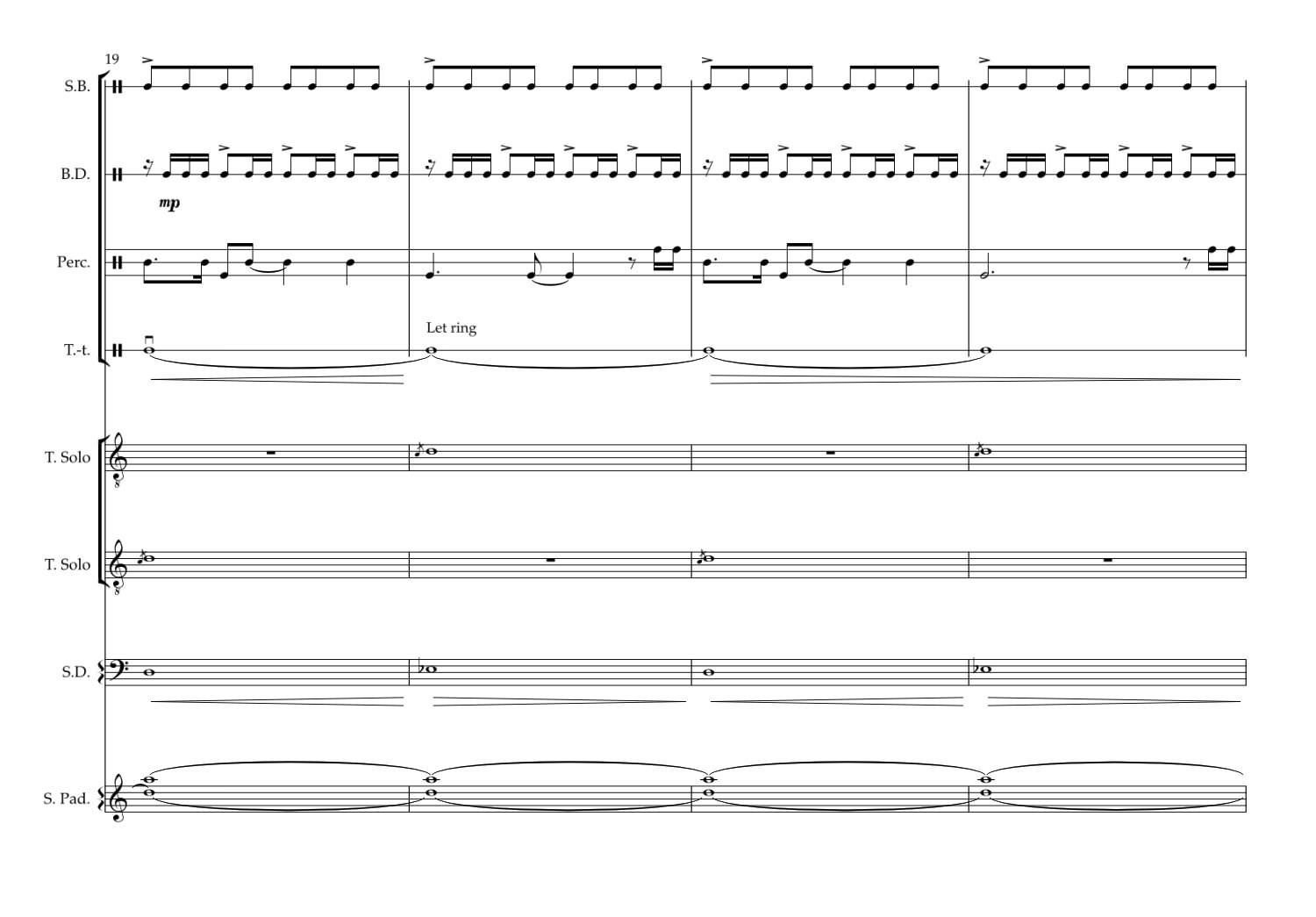

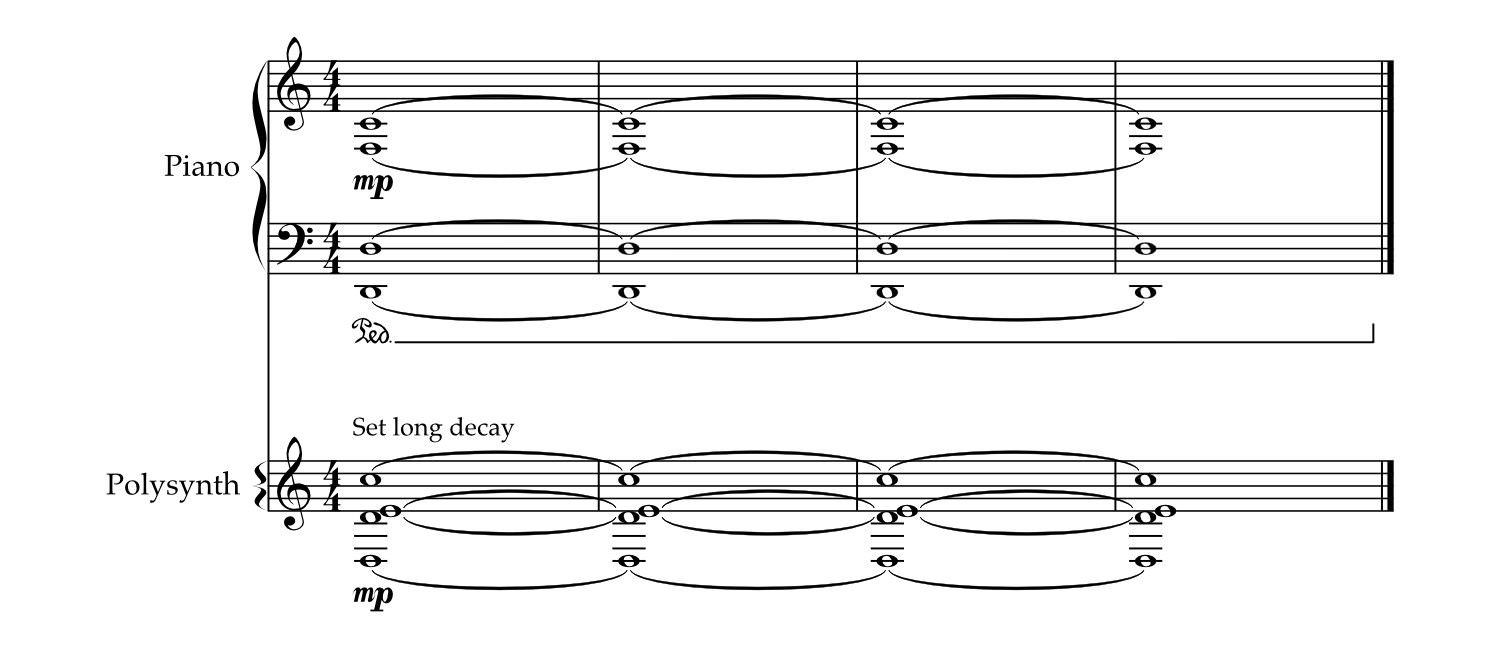

Sometimes, with a quick transition like this, I use a stinger (a short musical phrase or sound) to sweeten the exchange of sections. But in this case, I've written the piano melody to start with a quaver pickup. Here's a quick look at the score for section two:

Because the piano note precedes the first bar of section two by a single quaver note, it lines up with the 8th note in the shaker part of section one. When the guitar and synth come in on the downbeat of section two, it feels like a natural progression, and most importantly, the transition is agile enough to sync with Alfie lighting up.

Section 3

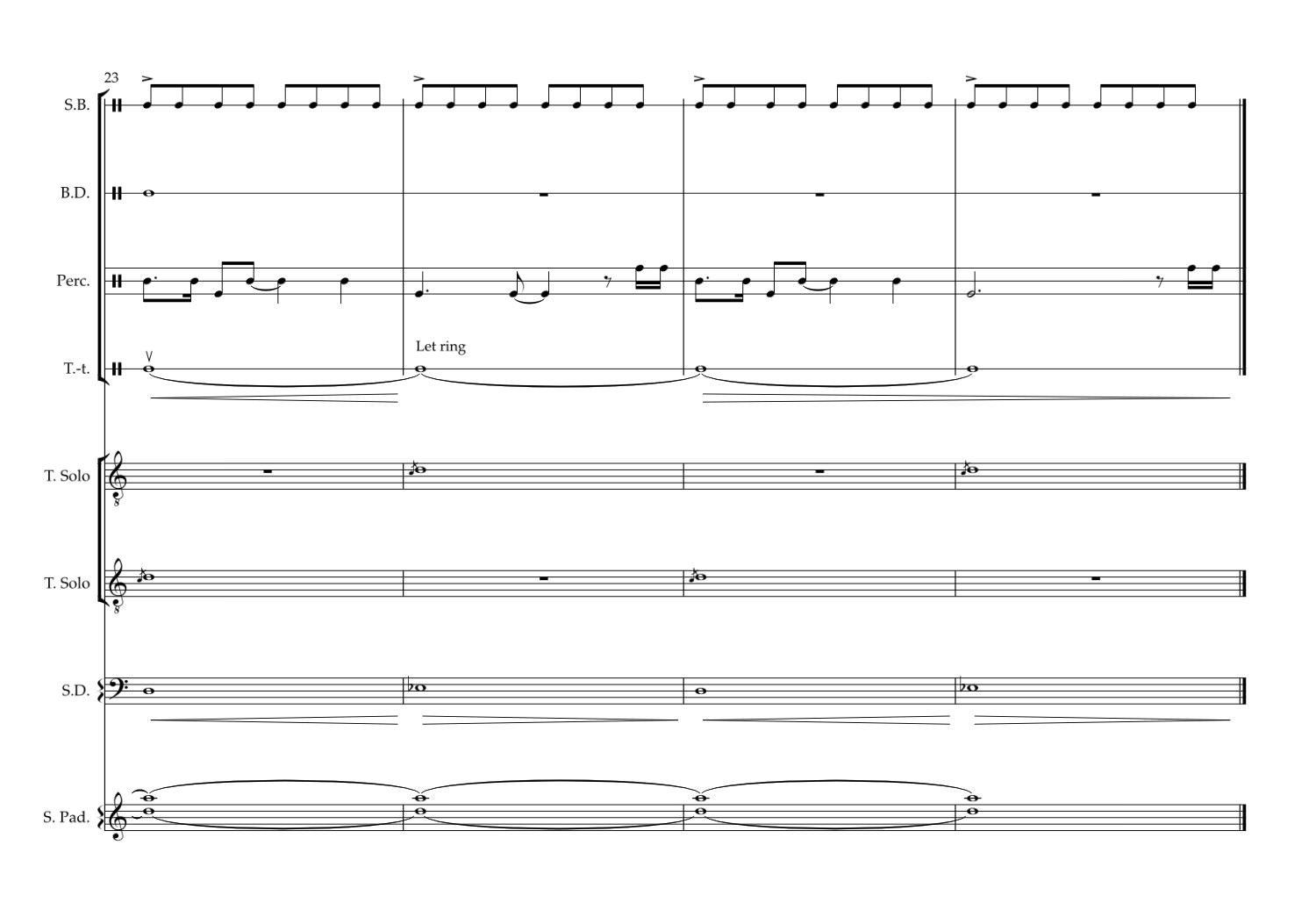

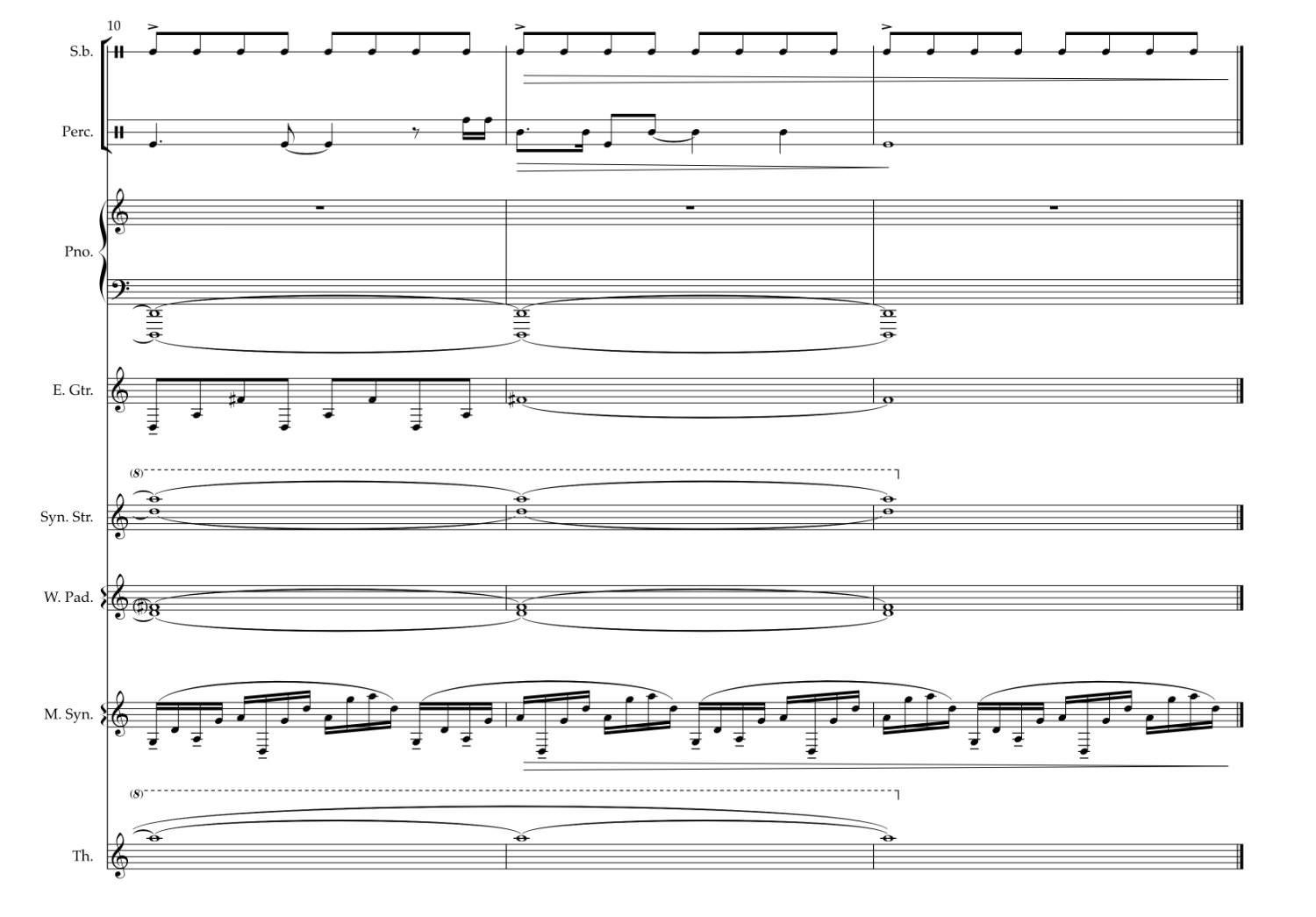

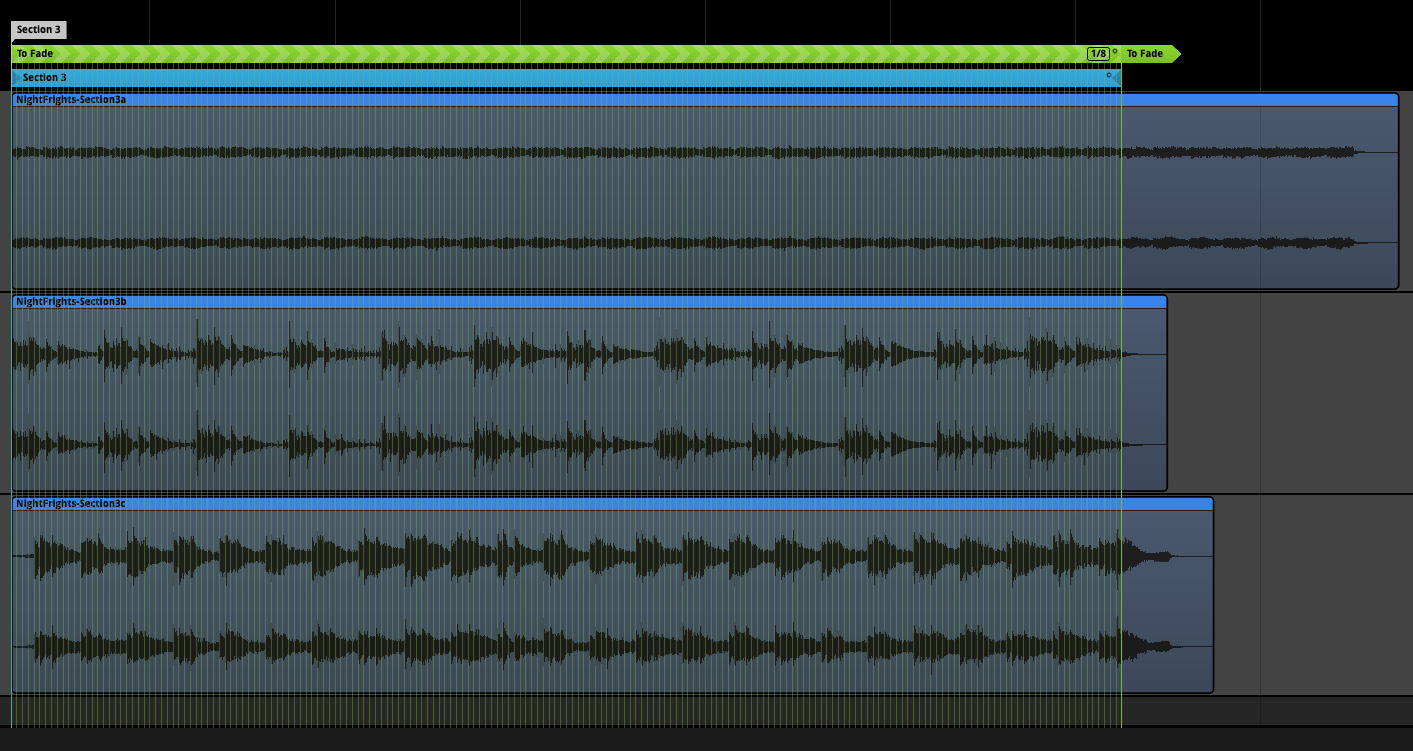

Section three is slightly more complicated than the others. I've rendered it in three separate stems: keys/vocals, synth arpeggios and percussion.

This design gives me control over the relative levels of the instruments in the arrangement. The piano and glockenspiel, which play a long angular melody, and the percussion can be controlled separately from the synth arpeggios.

By splitting the instrumentation into three groups, I can dynamically alter the volume of a particular instrument group relative to others.

Dynamic Fades

Alfie is once again the trigger for this to happen. After he gives Kai the all-clear, the piano melody and percussion begin to fade. Tucking the dissonant elements underneath the synth arpeggios reveals the consonant sound of the synth, which helps signify that danger has passed.

In this video clip, you can hear the music fade after Alfie gives the all-clear.

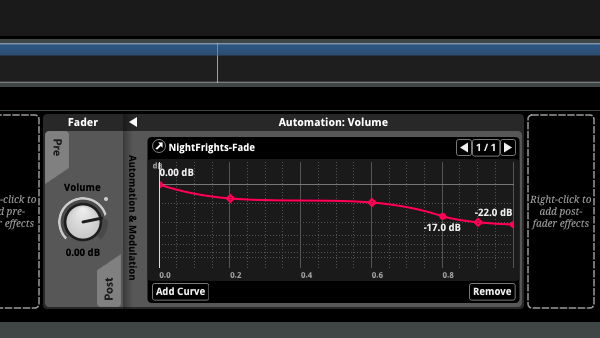

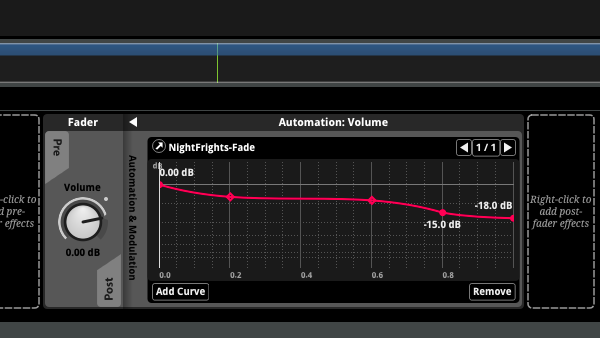

FMOD's automation control allows me to shape the volume curve. In this case, I have independent values for the percussion, which fades from 0.00 dB to -18 dB, and the keys, which fade from 0.00 dB to -22 dB.
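To give a feel for those numbers, here is a Python sketch that maps a fade value between 0 and 1 onto each stem's level. The end levels come from the values above, but the straight-line curve in decibels is an assumption (the real automation curve can be any shape), and the names are hypothetical.

```python
# End-of-fade levels for the two stems that are tucked under the synth.
END_LEVELS_DB = {"percussion": -18.0, "keys": -22.0}


def db_to_gain(db: float) -> float:
    """Convert a level in decibels to a linear amplitude multiplier."""
    return 10.0 ** (db / 20.0)


def stem_levels_db(fade: float) -> dict[str, float]:
    """Level of each stem in dB for a fade value between 0 and 1.

    Assumes a straight line in dB from 0 dB down to the end level.
    """
    fade = min(max(fade, 0.0), 1.0)  # keep the value inside its range
    return {stem: fade * end_db for stem, end_db in END_LEVELS_DB.items()}


# Halfway through the fade:
for stem, level in stem_levels_db(0.5).items():
    print(f"{stem}: {level:.1f} dB (gain {db_to_gain(level):.3f})")
```

At the end of the fade, -18 dB corresponds to roughly an eighth of the original amplitude, so the percussion is still present but sits well underneath the synth arpeggios.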

Seek speed is another handy control in FMOD. It applies a damping property to the fade. When Unity activates the fade parameter, moving the value from 0 to 1, the resulting transition between values happens over a specific time interval. I've set the seek speed value to produce a long slow fade that reduces the volume of the affected stems over 26 seconds, just long enough for the player to return to the plane. If this takes longer, the music can loop until then.
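The behaviour can be sketched in Python as a value that chases its target at a limited rate. This is a toy model, assuming seek speed acts as a maximum rate of change in parameter units per second, so a 0 to 1 move over 26 seconds corresponds to a rate of 1/26. The function name and the half-second update step are assumptions.

```python
import math


def seek_toward(current: float, target: float, seek_speed: float, dt: float) -> float:
    """Move `current` toward `target` by at most `seek_speed * dt`."""
    max_step = seek_speed * dt
    delta = target - current
    if abs(delta) <= max_step:
        return target  # close enough to land exactly on the target
    return current + math.copysign(max_step, delta)


# The game sets the target to 1; the audible value follows over 26 seconds.
seek_speed = 1.0 / 26.0  # parameter units per second (assumed)
value, elapsed, dt = 0.0, 0.0, 0.5
while value < 1.0:
    value = seek_toward(value, 1.0, seek_speed, dt)
    elapsed += dt
print(f"fade complete after {elapsed:.1f} s")
```

The game only has to set the target once. The slow ramp is handled by the seek speed, so the fade is the same length no matter when the trigger arrives.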

Section 4

The final section is just one chord, a Dm9 played between the piano and synth.

As Kai enters the plane, FMOD transitions to the chord. The synth arpeggio from section three fades underneath, bringing Night Frights to a close.

As you can see, there are many details to consider when using adaptive music in video games. But composing music this way is so much fun, and the feeling of playing a completed scene after all the hard work is pure satisfaction!

Full Steam Ahead

With the latest update to the AI Companion System complete, we are forging ahead with development. There is still much to do and lots of music to write before Arctic Awakening is ready for you to play. In the meantime, look out for the updates on Discord, Twitter and the newsletter!